Edge AI Breaks When Everyone Has to Wait

Many edge-AI problems are described as bandwidth problems. That framing is true but incomplete. In practice, the more corrosive bottleneck is synchronization: vehicles disconnect, edge devices drift in and out of range, and the clean round structure of synchronous federated learning starts to feel like a fantasy.

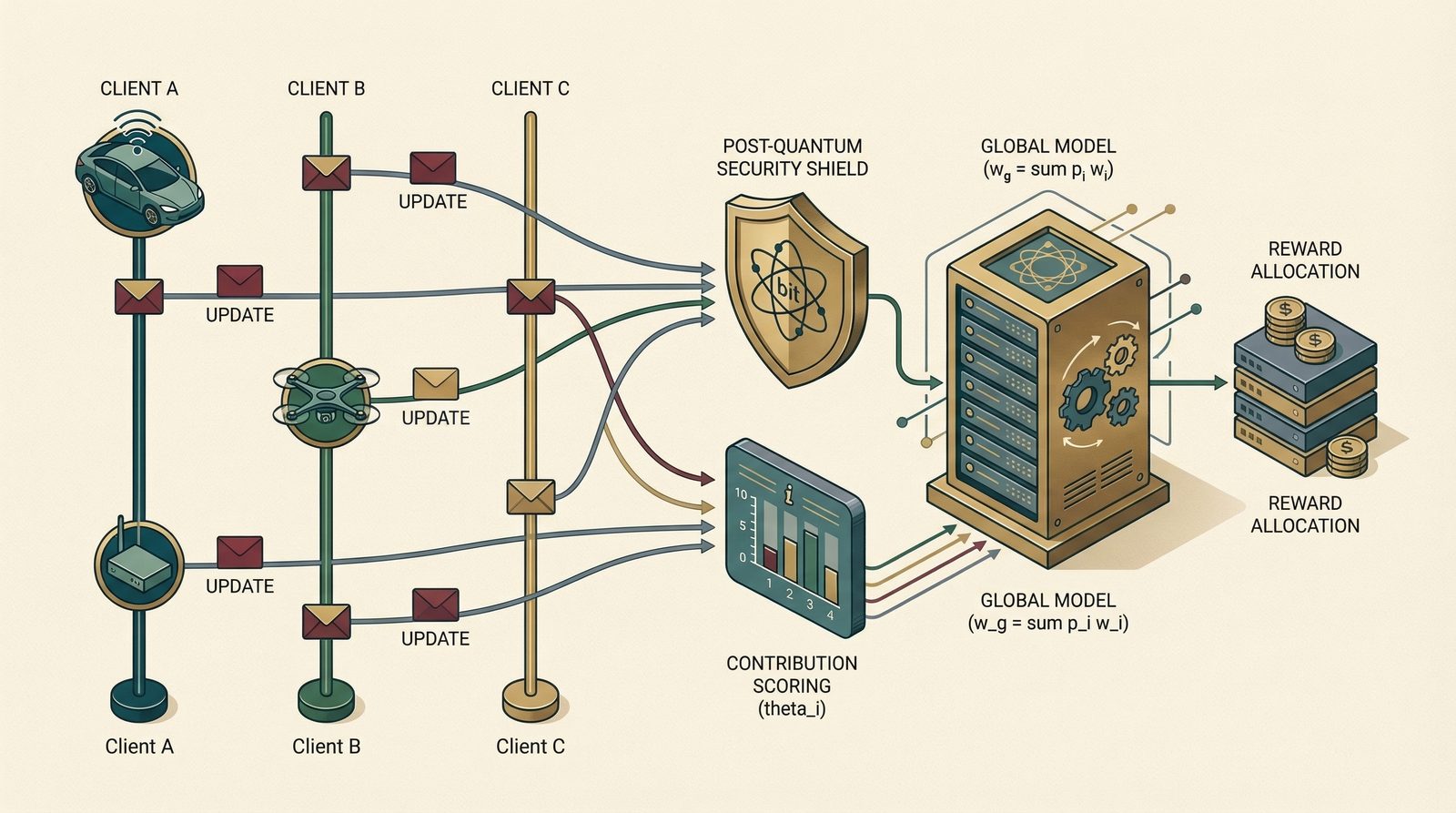

That is why I think BFL-MEC is more interesting as a coordination paper than as yet another “blockchain + federated learning” paper. Its strongest idea is that once the environment is genuinely mobile, three design choices can no longer be separated: how updates arrive, how trust is established, and how rewards are assigned.

The design question

If updates arrive late, disappear, and reappear, why should every gradient count equally, and why should every client be rewarded equally?

The paper's real move is architectural: treat asynchrony, trust, and incentives as one problem rather than three patches.

Asynchrony changes the learning rule

The local update is still familiar stochastic gradient descent. The novelty is not in the client-side step itself; it is in what happens when many such steps arrive out of sync.

Local client update

$$w^i \leftarrow w^i - \eta \nabla \ell(w^i; b).$$

In synchronous FL, this line hides a social contract: everyone pauses, everyone contributes to the next round, and stale information is limited by protocol. In edge settings, that contract collapses. Some clients have fresh information, some are delayed, and some may vanish before the round metaphor even makes sense.

BFL-MEC therefore replaces naive averaging with contribution-weighted aggregation, so that stale or weakly useful updates do not receive the same authority as highly aligned ones.

Contribution-weighted aggregation

$$w_g \leftarrow \sum_{i=1}^{n} p_i w^i,\qquad p_i = \frac{\theta_i}{\sum_{k=1}^{\lambda n}\theta_k}.$$

The elegance here is that the aggregation rule already begins to answer the incentive question. If some clients systematically move the global model in a more useful direction, the system has a principled reason to give them more influence.
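To make the weighting concrete, here is a minimal sketch of the aggregation rule in Python. The function name `aggregate` and the use of NumPy arrays for model weights are my assumptions for illustration, not the paper's implementation. The sketch also simplifies by normalizing the scores over exactly the clients passed in, so the weights sum to one.

```python
import numpy as np

def aggregate(client_weights, scores):
    """Contribution-weighted average of client models (illustrative sketch).

    client_weights: list of equally shaped arrays, one per client (w^i).
    scores: non-negative contribution scores, one per client (theta_i).
    Assumption: scores are normalized over the clients passed in.
    """
    scores = np.asarray(scores, dtype=float)
    if scores.shape[0] != len(client_weights):
        raise ValueError("need exactly one score per client")
    if np.any(scores < 0) or scores.sum() <= 0:
        raise ValueError("scores must be non-negative with a positive sum")

    p = scores / scores.sum()             # p_i = theta_i / sum_k theta_k
    stacked = np.stack(client_weights)    # shape: (n_clients, ...)
    # Weighted sum over the client axis: w_g = sum_i p_i * w^i
    return np.tensordot(p, stacked, axes=1)

# Two clients; the second has three times the contribution score.
w_global = aggregate(
    [np.array([0.0, 0.0]), np.array([4.0, 8.0])],
    scores=[1.0, 3.0],
)
print(w_global)
```

With equal scores this reduces to plain averaging, which is a useful sanity check: the contribution scores are the only thing separating this rule from the synchronous baseline.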

Delay is not a nuisance variable

Asynchronous learning is not free. It buys flexibility by introducing staleness, and the paper is careful not to hide that fact. It explicitly defines average delay so the convergence discussion has a systems quantity to hold onto.

Average delay

$$\tau_{\mathrm{avg}}^{i}=\frac{1}{T_i}\left(\sum_{t:j_t=i}\tau_t+\sum_k\tau_k^{C_T,i}\right).$$

I like this because it prevents the blog-post version of the idea from sounding too magical. “Asynchronous” is not just a nice word for flexibility. It is a measurable change in the optimization environment. Once delay enters the picture, the learning rule, the proof story, and the deployment story all need to acknowledge it.
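A small sketch shows how such a quantity could be tracked for one client. I am reading the first sum as the delays of that client's updates that were actually applied, the second as the delays of its updates still in flight when training stops, and the normalizer $T_i$ as the total count of both. Those readings, and the helper name `average_delay`, are my assumptions rather than definitions taken from the paper.

```python
def average_delay(applied_delays, pending_delays):
    """Average staleness for one client (illustrative sketch).

    applied_delays: delay (in global steps) of each update that was applied.
    pending_delays: delay accumulated so far by updates still in flight.
    Assumption: the normalizer T_i is the total number of both kinds.
    """
    count = len(applied_delays) + len(pending_delays)
    if count == 0:
        return 0.0  # a client that never sent anything has no delay to report
    return (sum(applied_delays) + sum(pending_delays)) / count

# Three applied updates and one update still in flight at the end of training.
tau_avg = average_delay(applied_delays=[0, 2, 4], pending_delays=[6])
print(tau_avg)
```

Counting in-flight updates matters here: a client whose last update never lands would otherwise look fresher than it really was.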

Security cannot be bolted on afterward

The post-quantum angle in this paper is easy to dismiss as a side quest. I do not think it is. Once the system is decentralized and highly mobile, identity verification becomes part of the hot path. If your trust mechanism is slow, your elegant asynchronous design still stalls in practice.

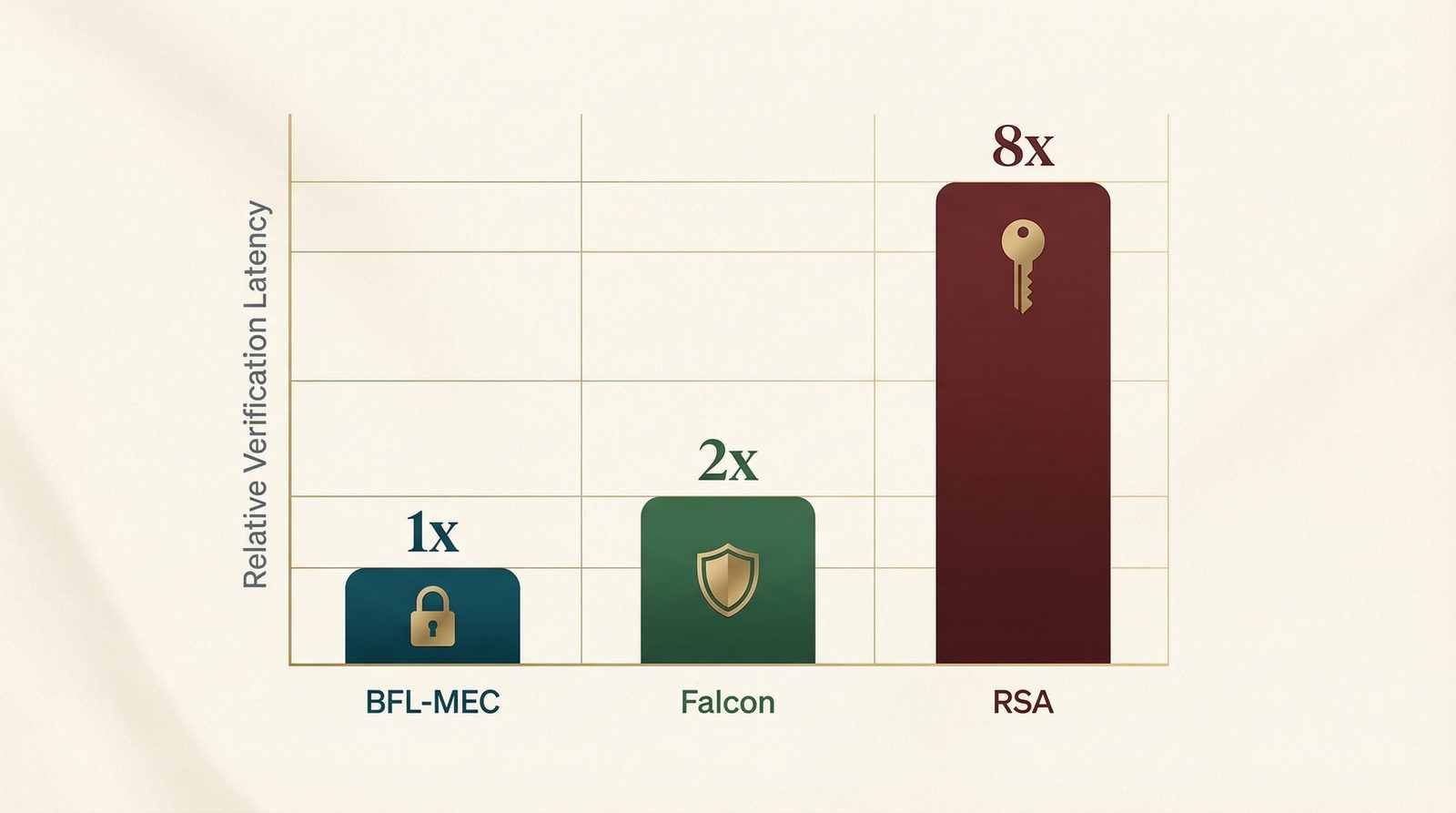

The paper reports that the proposed cryptographic path is substantially faster than both RSA, a classical scheme, and Falcon, a post-quantum signature scheme, while remaining post-quantum oriented.

That is why the paper's security choice matters architecturally. Post-quantum verification is not introduced here as ornamentation. It is introduced because trust must survive future cryptographic shifts without turning the coordination path into molasses.

Reward follows usefulness

$$\mathrm{reward}_i = \mathrm{base}\cdot \frac{\theta_i}{\sum_{k=1}^{\lambda n}\theta_k}.$$

This equation is deceptively small. It says that usefulness to the global model, not attendance, should determine economic treatment. That is a strong systems stance, because it aligns the optimization layer and the incentive layer instead of letting them drift apart.
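The reward rule can be sketched in a few lines of Python. The function name `allocate_rewards`, the dictionary of client IDs, and the choice to normalize over exactly the clients passed in are assumptions I am making for illustration; the paper's actual payout mechanism may differ.

```python
def allocate_rewards(base, scores):
    """Split a reward pool in proportion to contribution scores (sketch).

    base: total reward available for this aggregation step.
    scores: dict mapping a hypothetical client ID to its score theta_i.
    """
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("at least one client needs a positive score")
    # reward_i = base * theta_i / sum_k theta_k
    return {cid: base * s / total for cid, s in scores.items()}

rewards = allocate_rewards(100.0, {"vehicle-a": 1.0, "vehicle-b": 3.0})
print(rewards)
```

The same normalized scores that set aggregation weights set the payouts here, so a client cannot gain reward without also gaining influence over the global model.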

What I still think is worth debating

- How to define usefulness. Contribution scores are practical, but edge systems may care about safety, calibration, or local specialization, not just global alignment.

- How much staleness is acceptable. An asynchronous system never fully escapes the question of delayed influence; it only manages it better.

- How much cryptography the edge can really afford. A secure path that is theoretically elegant but operationally heavy is still the wrong tool for many verticals.

The takeaway I keep returning to is this: in mobile edge AI, synchronization is not a side constraint. It is the design surface. Once you accept that, aggregation, incentives, and verification all start to look less like add-ons and more like one coordinated rule.

Primary source: arXiv.